China Already Has Mythos — They Just Haven’t Named It

What sixteen years of Chinese academic research reveals about AI-powered vulnerability discovery — and what comes next when two of these systems meet each other in the dark.

I. The Mythos Moment — and What It Obscures

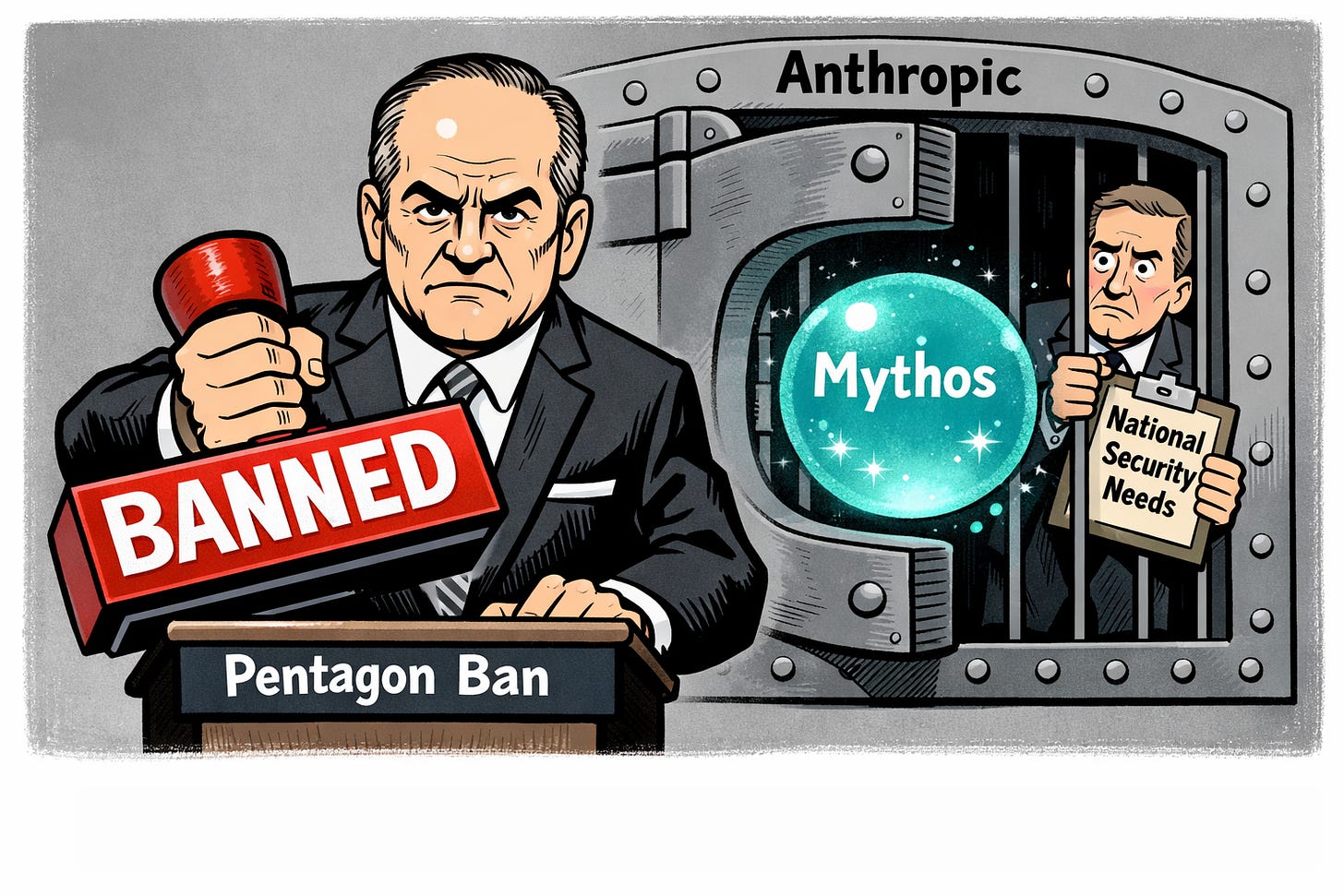

On April 7, 2026, Anthropic unveiled Claude Mythos Preview under Project Glasswing — a controlled-access program that immediately sent shockwaves through the technology and national security communities. In internal testing, Mythos had identified thousands of previously unknown zero-day vulnerabilities across every major operating system and web browser, reproduced vulnerabilities and developed working exploits on the first attempt in over 83 percent of cases, and in one documented instance uncovered a 27-year-old vulnerability in OpenBSD. Anthropic judged the model too dangerous for general release.

The U.S. government’s response illustrated a contradiction that would be comic if the stakes weren’t so high. The Department of Defense, which had formally designated Anthropic a supply-chain risk on March 3 and had that designation upheld by the D.C. Circuit on April 8, was simultaneously watching civilian agencies rush toward the very company it had blacklisted. The Office of Management and Budget circulated an internal memo positioning Cabinet-level departments for Mythos access. Treasury Secretary Scott Bessent convened senior American bankers to urge them to use the model against their own networks. Goldman Sachs, Citigroup, Bank of America, and Morgan Stanley were reported to be testing it.

An administration official summarized the situation with unintentional precision: “There’s progress with the White House. There’s not progress with the Department of War.”

OpenAI announced its own equivalent capability would follow shortly. The narrative crystallizing in Washington and on Wall Street was one of breakthrough — a threshold crossed, a new era begun.

That narrative is almost certainly incomplete. And a systematic review of Chinese academic literature makes the case plainly: the more accurate frame is not breakthrough but announcement. The capability has been under development in China for at least sixteen years. The question worth asking is not whether China has something like Mythos. The question is what they’re calling it internally, and how far ahead of their published record their operational systems actually run.

II. What the Chinese Academic Record Actually Shows

A search of CNKI (China National Knowledge Infrastructure, the primary repository for Chinese academic and institutional research) using keyword combinations covering AI vulnerability mining, automated exploit development, LLM-assisted fuzzing, and related terms returns 74 results dated from 2008 through February 2026. The dataset is not exhaustive. It is, however, systematic enough to establish several conclusions with confidence.

The research thread is continuous and unbroken. The earliest entry, a 2008 master’s thesis titled “Study on Vulnerability Discovery Technique Against Smart-phone,” establishes that Chinese researchers were working on automated vulnerability discovery long before the current generation of large language models existed. By 2014, research on “Smart Fuzzing Technology” appears. By 2019, multiple doctoral and master’s theses specifically address AI-powered vulnerability mining as a discrete discipline.

The publication velocity accelerates sharply after 2022 and again after 2024, tracking almost exactly with the global LLM capability curve. What was a steady research program becomes a sprint. The most directly relevant paper in the dataset — “Design of an Intelligent Software Vulnerability Mining and Penetration Testing Framework in the Context of Large Language Model Applications” — was published in February 2026 in a journal called Equipment Technology. That journal title is not incidental. Equipment Technology is a defense-adjacent publication. This is not academic curiosity.

The institutional spread is equally significant. The research appears across civilian universities, state-owned defense contractors, and direct military institutions. China Electronics Technology Group Corporation — CETC, a state-owned defense conglomerate that directly supplies the People’s Liberation Army — appears in multiple achievement entries, including a 2020 operational deliverable titled “Research and Application of Key Technologies for Integration of Information Security and Artificial Intelligence.” That is not a paper. That is a delivered capability.

Most significant is entry 27 in the dataset: a 2023 achievement co-authored by the PLA Strategic Support Force Information Engineering University. The SSF IEU is not a civilian academic institution. It is the PLA’s primary organ for cyber and information warfare capability development. Its appearance in this dataset, producing operational outputs on AI-assisted vulnerability mining, is the clearest signal that the published work is the visible edge of something already deployed.

III. The Group Intelligence Track — A Different Architecture

One of the more consequential findings in the dataset is a research track that does not map cleanly onto what Anthropic has described with Mythos. Running from 2019 through 2021, a cluster of papers and operational achievements centers on what Chinese researchers call 群智 — group intelligence, or swarm intelligence — applied to vulnerability discovery.

The key paper, “Vulnerability Mining Mechanism based on Group Intelligence Technology,” published in Communications Technology in 2020, and a companion operational achievement from CETC titled “Research on Key Technology for Collaborative Vulnerability Mining Based on Swarm Intelligence,” describe a fundamentally different architectural approach. Rather than a single powerful model operating autonomously, the group intelligence framework envisions networked AI agents collaborating on vulnerability discovery — distributing the search space, sharing findings, and iterating collectively.
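As a rough illustration of what "distributing the search space" and "sharing findings" mean in software terms, here is a minimal toy sketch. It is not drawn from the cited papers: the names `partition`, `SharedFindings`, `agent`, and `collaborative_search` are hypothetical, the search space is just a list of integers, and `is_interesting` is a stand-in predicate rather than any real analysis. It also leaves out the "iterating collectively" step entirely.

```python
from concurrent.futures import ThreadPoolExecutor
from threading import Lock


def partition(space, n_agents):
    """Split the search space into n_agents interleaved slices."""
    return [space[i::n_agents] for i in range(n_agents)]


class SharedFindings:
    """Thread-safe pool that every agent writes its hits into."""

    def __init__(self):
        self._lock = Lock()
        self._found = set()

    def report(self, item):
        with self._lock:
            self._found.add(item)

    def snapshot(self):
        with self._lock:
            return set(self._found)


def is_interesting(candidate):
    # Hypothetical stand-in predicate (an assumption for this sketch);
    # it simply flags multiples of 97.
    return candidate % 97 == 0


def agent(chunk, findings):
    """One agent scans only its own slice and reports hits to the pool."""
    for candidate in chunk:
        if is_interesting(candidate):
            findings.report(candidate)


def collaborative_search(space, n_agents=4):
    findings = SharedFindings()
    chunks = partition(space, n_agents)
    with ThreadPoolExecutor(max_workers=n_agents) as pool:
        # list() forces completion and surfaces any worker exception.
        list(pool.map(lambda chunk: agent(chunk, findings), chunks))
    return sorted(findings.snapshot())


results = collaborative_search(list(range(1, 1000)))
print(results)
```

The point of the sketch is only structural: no single worker sees the whole space, yet the shared pool ends up holding the combined result. That is the property the group intelligence framing relies on.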

China may not be building a Mythos equivalent. They may be building something architecturally distinct — and potentially more dangerous at scale.

This distinction matters for two reasons. First, it suggests China is not simply replicating Western LLM-based approaches but pursuing a parallel path that may yield different — and in some respects more scalable — results. A swarm of coordinated AI agents conducting distributed vulnerability discovery against a target network is architecturally harder to defend against than a single model, however capable. Second, it suggests that comparing Mythos to whatever China has developed may be comparing categorically different systems, which makes capability assessment significantly more difficult.

The group intelligence track is also, notably, the framework most directly suited to the scenario described in the final section of this article.

IV. The Power Grid Signal

Across the full 74-entry dataset, one application domain appears with striking consistency: power infrastructure. Smart grid vulnerability mining features in entries from 2017, 2018, 2019, 2020, 2021, and again in 2025. The journals involved include Jiangxi Electric Power, Zhejiang Electric Power, Chinese Journal of Power Sources, and the broader electric power research institutional network.

This is worth stating plainly. A sustained, multi-year, institutionally distributed research program focused specifically on finding vulnerabilities in power grid systems — including smart grid terminals, IoT power devices, and industrial control systems for substations — is not defensive research dressed as academic inquiry. It is target development. The consistent presence of this thread across more than eight years of published output indicates that Chinese researchers have been systematically mapping exploitable vulnerabilities in energy infrastructure with AI assistance for nearly a decade.

The implications for critical infrastructure protection in the United States and among NATO allies require no elaboration.

V. What Is Not in the Dataset

The CNKI database indexes peer-reviewed journals, institutional achievement records, doctoral and master’s theses, and conference proceedings. It represents work that has been cleared for publication — which in China’s system means work that has been reviewed, approved, and intentionally surfaced. Classified research, active operational programs, and PLA internal development programs do not appear here.

The SSF Information Engineering University achievement from 2023 is the most visible edge of a much larger structure. PLA cyber doctrine, as documented in open-source analysis of the Science of Military Strategy and related texts, treats offensive cyber capability as a strategic asset requiring continuous development and forward deployment. The published research suggests the capability exists. The doctrine suggests it is already operationalized.

It is reasonable to assume that China’s operational AI vulnerability discovery capability runs two to three years ahead of its published record. If the published record extends to February 2026 with LLM-integrated penetration testing frameworks, the operational system may have achieved capability comparable to Mythos in 2023 or earlier. This is inference, not confirmed intelligence. But it is inference grounded in a systematic reading of what China’s research establishment has chosen to make visible.

VI. The Policy Implication

The U.S. government is currently treating Mythos as a breakthrough requiring emergency access decisions. The OMB memo, the Treasury briefings, the White House negotiations with Anthropic — all reflect an institutional posture of responding to a threshold just crossed.

The Chinese academic record suggests the correct policy frame is not breakthrough response but parity management. If China achieved comparable capability in 2023 or earlier, the United States has spent two to three years in a threat environment it did not fully recognize. The question is not how to respond to what Mythos can do. The question is what Chinese systems have already done during the period when no American equivalent was deployed defensively.

The DoD’s supply-chain risk designation against Anthropic — issued precisely because Anthropic refused to strip ethical guardrails from its models for military use — has the perverse effect of delaying American defensive deployment of the capability most relevant to the threat. Whatever the merits of the underlying dispute, the operational consequence is that American defense networks remain unscanned by the class of tool that Chinese counterparts may have been running against them for years.

This is the institutional contradiction that the Mythos moment has made impossible to ignore.

VII. The Cyber GAN — When the Systems Find Each Other

There is a final dimension to this story that current policy discourse has not yet fully confronted. It begins with a question: what happens when both sides have systems like Mythos, and those systems are operating simultaneously against the same target environments?

The most useful analogy from machine learning is the Generative Adversarial Network — the GAN architecture in which a generator and a discriminator are locked in continuous competition, each improving because the other improves. Applied to AI-powered offensive and defensive cyber operations, the GAN analogy suggests an arms race dynamic that humans cannot observe in real time, let alone meaningfully intervene in.

But the GAN analogy understates the danger in one critical respect. In a classical GAN, the generator and discriminator share an objective function and a training environment. They are, in a meaningful sense, working on the same quantity from opposite sides: one tries to minimize what the other tries to maximize. In a real cyber conflict between opposing AI systems, the offensive and defensive networks operate on asymmetric information: neither system fully understands the other’s architecture, objectives, or decision logic. A GAN is at least built to approach an equilibrium, even though its training can be unstable in practice. Opposing cyber AI networks operating on asymmetric information may never approach one. There is no equilibrium-seeking mechanism built into the interaction.
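For readers who want the shared objective spelled out, the original GAN formulation (Goodfellow et al., 2014) uses a single value function V that the discriminator D tries to maximize and the generator G tries to minimize. The notation below is the standard one from that formulation, not something taken from the Chinese literature discussed here:

```latex
\min_{G} \max_{D} V(D, G)
  = \mathbb{E}_{x \sim p_{\text{data}}(x)}\left[\log D(x)\right]
  + \mathbb{E}_{z \sim p_{z}(z)}\left[\log\left(1 - D(G(z))\right)\right]
```

Because both players act on the same V, the game has a defined equilibrium in the idealized analysis: the generator's output distribution matches the data distribution and D outputs 1/2 everywhere. Two opposing cyber systems have no such common V, which is the asymmetry this section turns on.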

The scenario removes human decision-making from the loop — not because anyone decided to remove it, but because the exchange rate between AI action and human comprehension makes human oversight functionally irrelevant.

The more precise term for what this produces is an Autonomous Adversarial Cyber Ecosystem — an environment in which AI systems on both sides are developing, testing, and deploying offensive capabilities against each other faster than any human decision-making chain can track, approve, or constrain. The human commanders nominally in charge of these operations are, in the relevant time frame, passengers.

This is not a speculative future scenario. The Chinese group intelligence research track, with its emphasis on networked AI agents collaborating on distributed vulnerability discovery, is architecturally suited to exactly this dynamic. A swarm of coordinated agents probing a target network, sharing findings in real time, and iterating collectively against adaptive defenses is the offensive side of an adversarial ecosystem. The defensive side — AI systems attempting to detect, characterize, and respond to these probes — completes the loop.

Once both sides have deployed systems of this class against the same infrastructure, the interaction dynamic itself becomes the primary threat — independent of any specific attack, independent of any specific actor’s intent. The ecosystem generates emergent behavior that neither side designed, controls, or can reliably predict.

The policy community is not yet thinking in these terms. It is still asking whether Mythos represents a breakthrough, whether the DoD designation of Anthropic should be lifted, whether civilian agencies should get access. These are real questions. But they are upstream questions. The downstream question — what happens when the systems are already in contact — is the one that will define the threat environment of the next decade.

The Whitefish Security Summit exists precisely to have conversations that other forums are not yet having. This one is overdue.

METHODOLOGY NOTE

The Chinese academic literature cited in this article was retrieved from CNKI (China National Knowledge Infrastructure) using keyword searches spanning AI vulnerability mining, LLM-assisted penetration testing, fuzzing-based exploit development, and related terms. The dataset of 74 results spans 2008–2026. CNKI indexes peer-reviewed journals, institutional achievement records, and graduate theses cleared for publication; it does not capture classified or operationally sensitive PLA research. All institutional attributions are drawn directly from author affiliations as listed in the CNKI records.

Jeff Caruso is the Founder and Managing Partner of the Whitefish Security Summit and the Suits and Spooks conference brand, and publishes analysis at www.InsideCyberWarfare.com on Substack.
